A Family of Objects That All Understand and Respond to the Same Methods Is Defined by a:

Statistics is the discipline that concerns the collection, organization, analysis, interpretation, and presentation of data.[1] [2] [3] In applying statistics to a scientific, industrial, or social problem, it is conventional to begin with a statistical population or a statistical model to be studied. Populations can be diverse groups of people or objects such as "all people living in a country" or "every atom composing a crystal". Statistics deals with every aspect of data, including the planning of data collection in terms of the design of surveys and experiments.[4]

When census data cannot be collected, statisticians collect data by developing specific experiment designs and survey samples. Representative sampling assures that inferences and conclusions can reasonably extend from the sample to the population as a whole. An experimental study involves taking measurements of the system under study, manipulating the system, and then taking additional measurements using the same procedure to determine if the manipulation has modified the values of the measurements. In contrast, an observational study does not involve experimental manipulation.

Two main statistical methods are used in data analysis: descriptive statistics, which summarize data from a sample using indexes such as the mean or standard deviation, and inferential statistics, which draw conclusions from data that are subject to random variation (e.g., observational errors, sampling variation).[5] Descriptive statistics are most often concerned with two sets of properties of a distribution (sample or population): central tendency (or location) seeks to characterize the distribution's central or typical value, while dispersion (or variability) characterizes the extent to which members of the distribution depart from its center and each other. Inferences on mathematical statistics are made under the framework of probability theory, which deals with the analysis of random phenomena.

A standard statistical procedure involves the collection of data leading to a test of the relationship between two statistical data sets, or a data set and synthetic data drawn from an idealized model. A hypothesis is proposed for the statistical relationship between the two data sets, and this is compared as an alternative to an idealized null hypothesis of no relationship between the two data sets. Rejecting or disproving the null hypothesis is done using statistical tests that quantify the sense in which the null can be proven false, given the data that are used in the test. Working from a null hypothesis, two basic forms of error are recognized: Type I errors (the null hypothesis is falsely rejected, giving a "false positive") and Type II errors (the null hypothesis fails to be rejected and an actual relationship between populations is missed, giving a "false negative").[6] Multiple problems have come to be associated with this framework, ranging from obtaining a sufficient sample size to specifying an adequate null hypothesis.[5]

Measurement processes that generate statistical data are also subject to error. Many of these errors are classified as random (noise) or systematic (bias), but other types of errors (e.g., blunder, such as when an analyst reports incorrect units) can also occur. The presence of missing data or censoring may result in biased estimates, and specific techniques have been developed to address these problems.

Introduction

Statistics is regarded either as a mathematical body of science that pertains to the collection, analysis, interpretation or explanation, and presentation of data,[7] or as a branch of mathematics.[8] Some consider statistics to be a distinct mathematical science rather than a branch of mathematics. While many scientific investigations make use of data, statistics is concerned with the use of data in the context of uncertainty and decision making in the face of uncertainty.[9] [10]

In applying statistics to a problem, it is common practice to start with a population or process to be studied. Populations can be diverse topics such as "all people living in a country" or "every atom composing a crystal". Ideally, statisticians compile data about the entire population (an operation called a census). This may be organized by governmental statistical institutes. Descriptive statistics can be used to summarize the population data. Numerical descriptors include mean and standard deviation for continuous data (like income), while frequency and percentage are more useful in terms of describing categorical data (like education).

When a census is not feasible, a chosen subset of the population called a sample is studied. Once a sample that is representative of the population is determined, data is collected for the sample members in an observational or experimental setting. Again, descriptive statistics can be used to summarize the sample data. However, drawing the sample contains an element of randomness; hence, the numerical descriptors from the sample are also prone to uncertainty. To draw meaningful conclusions about the entire population, inferential statistics is needed. It uses patterns in the sample data to draw inferences about the population represented while accounting for randomness. These inferences may take the form of answering yes/no questions about the data (hypothesis testing), estimating numerical characteristics of the data (estimation), describing associations within the data (correlation), and modeling relationships within the data (for example, using regression analysis). Inference can extend to forecasting, prediction, and estimation of unobserved values either in or associated with the population being studied. It can include extrapolation and interpolation of time series or spatial data, and data mining.

Mathematical statistics

Mathematical statistics is the application of mathematics to statistics. Mathematical techniques used for this include mathematical analysis, linear algebra, stochastic analysis, differential equations, and measure-theoretic probability theory.[11] [12]

History

The early writings on statistical inference date back to Arab mathematicians and cryptographers, during the Islamic Golden Age between the 8th and 13th centuries. Al-Khalil (717–786) wrote the Book of Cryptographic Messages, which contains the first use of permutations and combinations, to list all possible Arabic words with and without vowels.[13] In his book, Manuscript on Deciphering Cryptographic Messages, Al-Kindi gave a detailed description of how to use frequency analysis to decipher encrypted messages. Al-Kindi also made the earliest known use of statistical inference, while he and later Arab cryptographers developed the early statistical methods for decoding encrypted messages. Ibn Adlan (1187–1268) later made an important contribution on the use of sample size in frequency analysis.[13]

The earliest European writing on statistics dates back to 1663, with the publication of Natural and Political Observations upon the Bills of Mortality by John Graunt.[14] Early applications of statistical thinking revolved around the needs of states to base policy on demographic and economic data, hence its stat- etymology. The scope of the discipline of statistics broadened in the early 19th century to include the collection and analysis of data in general. Today, statistics is widely employed in government, business, and natural and social sciences.

The mathematical foundations of modern statistics were laid in the 17th century with the development of probability theory by Gerolamo Cardano, Blaise Pascal and Pierre de Fermat. Mathematical probability theory arose from the study of games of chance, although the concept of probability was already examined in medieval law and by philosophers such as Juan Caramuel.[15] The method of least squares was first described by Adrien-Marie Legendre in 1805.

The modern field of statistics emerged in the late 19th and early 20th century in three stages.[16] The first wave, at the turn of the century, was led by the work of Francis Galton and Karl Pearson, who transformed statistics into a rigorous mathematical discipline used for analysis, not just in science, but in industry and politics as well. Galton's contributions included introducing the concepts of standard deviation, correlation, regression analysis and the application of these methods to the study of the variety of human characteristics (height, weight, and eyelash length among others).[17] Pearson developed the Pearson product-moment correlation coefficient, defined as a product-moment,[18] the method of moments for the fitting of distributions to samples and the Pearson distribution, among many other things.[19] Galton and Pearson founded Biometrika as the first journal of mathematical statistics and biostatistics (then called biometry), and the latter founded the world's first university statistics department at University College London.[20]

Ronald Fisher coined the term null hypothesis during the Lady tasting tea experiment, which "is never proved or established, but is possibly disproved, in the course of experimentation".[21] [22]

The second wave of the 1910s and 20s was initiated by William Sealy Gosset, and reached its culmination in the insights of Ronald Fisher, who wrote the textbooks that were to define the academic discipline in universities around the world. Fisher's most important publications were his 1918 seminal paper The Correlation between Relatives on the Supposition of Mendelian Inheritance (which was the first to use the statistical term variance), his classic 1925 work Statistical Methods for Research Workers and his 1935 The Design of Experiments,[23] [24] [25] where he developed rigorous design of experiments models. He originated the concepts of sufficiency, ancillary statistics, Fisher's linear discriminator and Fisher information.[26] In his 1930 book The Genetical Theory of Natural Selection, he applied statistics to various biological concepts such as Fisher's principle[27] (which A. W. F. Edwards called "probably the most celebrated argument in evolutionary biology") and Fisherian runaway,[28] [29] [30] [31] [32] [33] a concept in sexual selection about a positive feedback runaway effect found in evolution.

The final wave, which mainly saw the refinement and expansion of earlier developments, emerged from the collaborative work between Egon Pearson and Jerzy Neyman in the 1930s. They introduced the concepts of "Type II" error, power of a test and confidence intervals. Jerzy Neyman in 1934 showed that stratified random sampling was in general a better method of estimation than purposive (quota) sampling.[34]

Today, statistical methods are applied in all fields that involve decision making, for making accurate inferences from a collated body of data and for making decisions in the face of uncertainty based on statistical methodology. The use of modern computers has expedited large-scale statistical computations and has also made possible new methods that are impractical to perform manually. Statistics continues to be an area of active research, for example on the problem of how to analyze big data.[35]

Statistical data

Data collection

Sampling

When full census data cannot be collected, statisticians collect sample data by developing specific experiment designs and survey samples. Statistics itself also provides tools for prediction and forecasting through statistical models.

To use a sample as a guide to an entire population, it is important that it truly represents the overall population. Representative sampling assures that inferences and conclusions can safely extend from the sample to the population as a whole. A major problem lies in determining the extent to which the sample chosen is actually representative. Statistics offers methods to estimate and correct for any bias within the sample and data collection procedures. There are also methods of experimental design that can lessen these issues at the outset of a study, strengthening its capability to discern truths about the population.

Sampling theory is part of the mathematical discipline of probability theory. Probability is used in mathematical statistics to study the sampling distributions of sample statistics and, more generally, the properties of statistical procedures. The use of any statistical method is valid when the system or population under consideration satisfies the assumptions of the method. The difference in point of view between classic probability theory and sampling theory is, roughly, that probability theory starts from the given parameters of a total population to deduce probabilities that pertain to samples. Statistical inference, however, moves in the opposite direction, inductively inferring from samples to the parameters of a larger or total population.

Experimental and observational studies

A common goal for a statistical research project is to investigate causality, and in particular to draw a conclusion on the effect of changes in the values of predictors or independent variables on dependent variables. There are two major types of causal statistical studies: experimental studies and observational studies. In both types of studies, the effect of differences of an independent variable (or variables) on the behavior of the dependent variable is observed. The difference between the two types lies in how the study is actually conducted. Each can be very effective. An experimental study involves taking measurements of the system under study, manipulating the system, and then taking additional measurements using the same procedure to determine if the manipulation has modified the values of the measurements. In contrast, an observational study does not involve experimental manipulation. Instead, data are gathered and correlations between predictors and response are investigated. While the tools of data analysis work best on data from randomized studies, they are also applied to other kinds of data, like natural experiments and observational studies,[36] for which a statistician would use a modified, more structured estimation method (e.g., difference in differences estimation and instrumental variables, among many others) that produces consistent estimators.

Experiments

The basic steps of a statistical experiment are:

- Planning the research, including finding the number of replicates of the study, using the following information: preliminary estimates regarding the size of treatment effects, alternative hypotheses, and the estimated experimental variability. Consideration of the selection of experimental subjects and the ethics of research is necessary. Statisticians recommend that experiments compare (at least) one new treatment with a standard treatment or control, to allow an unbiased estimate of the difference in treatment effects.

- Design of experiments, using blocking to reduce the influence of confounding variables, and randomized assignment of treatments to subjects to allow unbiased estimates of treatment effects and experimental error. At this stage, the experimenters and statisticians write the experimental protocol that will guide the performance of the experiment and which specifies the primary analysis of the experimental data.

- Performing the experiment following the experimental protocol and analyzing the data following the experimental protocol.

- Further examining the data set in secondary analyses, to suggest new hypotheses for future study.

- Documenting and presenting the results of the study.
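The design step above (blocking plus randomized assignment) can be illustrated with a minimal Python sketch. The function name `randomized_block_assignment`, the "young"/"old" blocking variable, and the twelve subject ids are all hypothetical choices made for this example; they are not taken from any particular study protocol.

```python
import random
from collections import defaultdict

def randomized_block_assignment(subjects, block_of, treatments, seed=0):
    """Randomly assign treatments within each block (illustrative sketch).

    subjects:   list of subject ids
    block_of:   dict mapping subject id -> block label (hypothetical blocking variable)
    treatments: list of treatment labels, e.g. ["control", "new"]
    """
    rng = random.Random(seed)

    # Group subjects by block so that randomization happens inside each block.
    blocks = defaultdict(list)
    for s in subjects:
        blocks[block_of[s]].append(s)

    assignment = {}
    for label in sorted(blocks):
        members = blocks[label]
        rng.shuffle(members)  # random order within the block
        # Cycle through treatments so each block is as balanced as possible.
        for i, s in enumerate(members):
            assignment[s] = treatments[i % len(treatments)]
    return assignment

# Made-up example: 12 subjects, blocked by a hypothetical age group.
subjects = list(range(12))
block_of = {s: ("young" if s < 6 else "old") for s in subjects}
assignment = randomized_block_assignment(subjects, block_of, ["control", "new"])
```

Because treatments are balanced inside every block, a difference between the "control" and "new" groups cannot be explained by the blocking variable alone, which is the purpose of blocking described above.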

Experiments on human behavior have special concerns. The famous Hawthorne study examined changes to the working environment at the Hawthorne plant of the Western Electric Company. The researchers were interested in determining whether increased illumination would increase the productivity of the assembly line workers. The researchers first measured the productivity in the plant, then modified the illumination in an area of the plant and checked if the changes in illumination affected productivity. It turned out that productivity indeed improved (under the experimental conditions). However, the study is heavily criticized today for errors in experimental procedures, specifically for the lack of a control group and blindness. The Hawthorne effect refers to finding that an outcome (in this case, worker productivity) changed due to observation itself. Those in the Hawthorne study became more productive not because the lighting was changed but because they were being observed.[37]

Observational study

An example of an observational study is one that explores the association between smoking and lung cancer. This type of study typically uses a survey to collect observations about the area of interest and then performs statistical analysis. In this case, the researchers would collect observations of both smokers and non-smokers, perhaps through a cohort study, and then look for the number of cases of lung cancer in each group.[38] A case-control study is another type of observational study in which people with and without the outcome of interest (e.g. lung cancer) are invited to participate and their exposure histories are collected.

Types of data

Various attempts have been made to produce a taxonomy of levels of measurement. The psychophysicist Stanley Smith Stevens defined nominal, ordinal, interval, and ratio scales. Nominal measurements do not have meaningful rank order among values, and permit any one-to-one (injective) transformation. Ordinal measurements have imprecise differences between consecutive values, but have a meaningful order to those values, and permit any order-preserving transformation. Interval measurements have meaningful distances between measurements defined, but the zero value is arbitrary (as in the case with longitude and temperature measurements in Celsius or Fahrenheit), and permit any linear transformation. Ratio measurements have both a meaningful zero value and the distances between different measurements defined, and permit any rescaling transformation.

Because variables conforming only to nominal or ordinal measurements cannot be reasonably measured numerically, sometimes they are grouped together as categorical variables, whereas ratio and interval measurements are grouped together as quantitative variables, which can be either discrete or continuous, due to their numerical nature. Such distinctions can often be loosely correlated with data type in computer science, in that dichotomous categorical variables may be represented with the Boolean data type, polytomous categorical variables with arbitrarily assigned integers in the integral data type, and continuous variables with the real data type involving floating point computation. But the mapping of computer science data types to statistical data types depends on which categorization of the latter is being implemented.

Other categorizations have been proposed. For example, Mosteller and Tukey (1977)[39] distinguished grades, ranks, counted fractions, counts, amounts, and balances. Nelder (1990)[40] described continuous counts, continuous ratios, count ratios, and categorical modes of data. (See also: Chrisman (1998),[41] van den Berg (1991).[42])

The issue of whether or not it is appropriate to apply different kinds of statistical methods to data obtained from different kinds of measurement procedures is complicated by issues concerning the transformation of variables and the precise interpretation of research questions. "The relationship between the data and what they describe merely reflects the fact that certain kinds of statistical statements may have truth values which are not invariant under some transformations. Whether or not a transformation is sensible to contemplate depends on the question one is trying to answer."[43] : 82

Methods

Descriptive statistics

A descriptive statistic (in the count noun sense) is a summary statistic that quantitatively describes or summarizes features of a collection of data,[44] while descriptive statistics in the mass noun sense is the process of using and analyzing those statistics. Descriptive statistics is distinguished from inferential statistics (or inductive statistics), in that descriptive statistics aims to summarize a sample, rather than use the data to learn about the population that the sample of data is thought to represent.
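As a small illustration of summarizing a sample, the sketch below computes measures of location and dispersion with Python's standard `statistics` module. The `incomes` values are made up for the example and do not come from any real data set.

```python
import statistics

# Made-up sample of annual incomes (illustrative values only).
incomes = [32_000, 41_500, 38_200, 55_000, 47_300, 120_000]

# Central tendency (location).
mean = statistics.mean(incomes)
median = statistics.median(incomes)

# Dispersion (variability).
stdev = statistics.stdev(incomes)          # sample standard deviation (n - 1 denominator)
data_range = max(incomes) - min(incomes)

print(f"mean={mean:.2f} median={median} stdev={stdev:.2f} range={data_range}")
```

The single large value pulls the mean well above the median, which is one reason both are usually reported when a distribution is skewed.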

Inferential statistics

Statistical inference is the process of using data analysis to deduce properties of an underlying probability distribution.[45] Inferential statistical analysis infers properties of a population, for example by testing hypotheses and deriving estimates. It is assumed that the observed data set is sampled from a larger population. Inferential statistics can be contrasted with descriptive statistics. Descriptive statistics is solely concerned with properties of the observed data, and it does not rest on the assumption that the data come from a larger population.

Terminology and theory of inferential statistics

Statistics, estimators and pivotal quantities

Consider independent identically distributed (IID) random variables with a given probability distribution: standard statistical inference and estimation theory defines a random sample as the random vector given by the column vector of these IID variables.[46] The population being examined is described by a probability distribution that may have unknown parameters.

A statistic is a random variable that is a function of the random sample, but not a function of unknown parameters. The probability distribution of the statistic, though, may have unknown parameters. Consider now a function of the unknown parameter: an estimator is a statistic used to estimate such a function. Commonly used estimators include the sample mean, unbiased sample variance and sample covariance.

A random variable that is a function of the random sample and of the unknown parameter, but whose probability distribution does not depend on the unknown parameter, is called a pivotal quantity or pivot. Widely used pivots include the z-score, the chi square statistic and Student's t-value.

Between two estimators of a given parameter, the one with lower mean squared error is said to be more efficient. Furthermore, an estimator is said to be unbiased if its expected value is equal to the true value of the unknown parameter being estimated, and asymptotically unbiased if its expected value converges at the limit to the true value of such parameter.
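Unbiasedness can be checked empirically by simulation. The sketch below compares two variance estimators on repeated small samples from a normal population whose true variance is known; the population parameters, sample size, trial count and seed are arbitrary assumptions made for the illustration.

```python
import random

def sum_sq_dev(xs):
    """Sum of squared deviations from the sample mean."""
    m = sum(xs) / len(xs)
    return sum((x - m) ** 2 for x in xs)

rng = random.Random(42)          # fixed seed so the run is reproducible
true_variance = 4.0              # population is Normal(mean=0, sd=2)
n, trials = 5, 20_000            # small samples make the bias visible

total_biased = 0.0
total_unbiased = 0.0
for _ in range(trials):
    sample = [rng.gauss(0.0, 2.0) for _ in range(n)]
    ss = sum_sq_dev(sample)
    total_biased += ss / n           # divides by n: biased downward
    total_unbiased += ss / (n - 1)   # divides by n - 1: unbiased

mean_biased = total_biased / trials
mean_unbiased = total_unbiased / trials
print(f"true={true_variance} biased~{mean_biased:.2f} unbiased~{mean_unbiased:.2f}")
```

Averaged over many samples, the n - 1 version should land near the true variance, while the n version should land near (n - 1)/n times it, which is 3.2 under these assumptions.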

Other desirable properties for estimators include: UMVUE estimators that have the lowest variance for all possible values of the parameter to be estimated (this is usually an easier property to verify than efficiency) and consistent estimators which converge in probability to the true value of such parameter.

This still leaves the question of how to obtain estimators in a given situation and carry out the computation. Several methods have been proposed: the method of moments, the maximum likelihood method, the least squares method and the more recent method of estimating equations.

Null hypothesis and alternative hypothesis

Interpretation of statistical information can often involve the development of a null hypothesis which is usually (but not necessarily) that no relationship exists among variables or that no change occurred over time.[47] [48]

The best illustration for a novice is the predicament encountered by a criminal trial. The null hypothesis, H0, asserts that the defendant is innocent, whereas the alternative hypothesis, H1, asserts that the defendant is guilty. The indictment comes because of suspicion of the guilt. The H0 (status quo) stands in opposition to H1 and is maintained unless H1 is supported by evidence "beyond a reasonable doubt". However, "failure to reject H0" in this case does not imply innocence, but merely that the evidence was insufficient to convict. So the jury does not necessarily accept H0 but fails to reject H0. While one can not "prove" a null hypothesis, one can test how close it is to being true with a power test, which tests for type II errors.

What statisticians call an alternative hypothesis is simply a hypothesis that contradicts the null hypothesis.

Error

Working from a null hypothesis, two broad categories of error are recognized:

- Type I errors where the null hypothesis is falsely rejected, giving a "false positive".

- Type II errors where the null hypothesis fails to be rejected and an actual difference between populations is missed, giving a "false negative".

Standard deviation refers to the extent to which individual observations in a sample differ from a central value, such as the sample or population mean, while standard error refers to an estimate of the difference between the sample mean and the population mean.
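The distinction can be made concrete with a short sketch. It assumes the usual estimate of the standard error of the mean, the sample standard deviation divided by the square root of the sample size; the `sample` values are made up for the example.

```python
import math
import statistics

# Made-up measurements (illustrative values only).
sample = [4.1, 5.3, 4.8, 5.9, 5.0, 4.6, 5.4, 4.9]
n = len(sample)

sd = statistics.stdev(sample)   # spread of individual observations around the sample mean
se = sd / math.sqrt(n)          # estimated uncertainty of the sample mean itself

print(f"n={n} sd={sd:.3f} se={se:.3f}")
```

Collecting more observations leaves the standard deviation roughly unchanged but shrinks the standard error, since the latter scales with one over the square root of the sample size.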

A statistical error is the amount by which an observation differs from its expected value. A residual is the amount an observation differs from the value the estimator of the expected value assumes on a given sample (also called prediction).

Mean squared error is used for obtaining efficient estimators, a widely used class of estimators. Root mean square error is simply the square root of mean squared error.

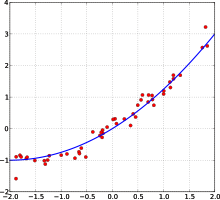

A least squares fit: in red the points to be fitted, in blue the fitted line.

Many statistical methods seek to minimize the residual sum of squares, and these are called "methods of least squares" in contrast to least absolute deviations. The latter gives equal weight to small and big errors, while the former gives more weight to large errors. Residual sum of squares is also differentiable, which provides a handy property for doing regression. Least squares applied to linear regression is called the ordinary least squares method, and least squares applied to nonlinear regression is called non-linear least squares. Also, in a linear regression model the non-deterministic part of the model is called the error term, disturbance or more simply noise. Both linear regression and non-linear regression are addressed in polynomial least squares, which also describes the variance in a prediction of the dependent variable (y axis) as a function of the independent variable (x axis) and the deviations (errors, noise, disturbances) from the estimated (fitted) curve.
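For a single predictor, ordinary least squares has a closed-form solution, sketched below in plain Python. The helper names `ols_fit` and `rss` and the five data points are assumptions made for this illustration.

```python
def ols_fit(xs, ys):
    """Return (intercept, slope) minimizing the residual sum of squares
    for the line y = intercept + slope * x."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return intercept, slope

def rss(xs, ys, intercept, slope):
    """Residual sum of squares of the line on the given points."""
    return sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))

# Made-up points lying close to a straight line.
xs = [0, 1, 2, 3, 4]
ys = [1.1, 2.9, 5.2, 7.1, 8.8]

intercept, slope = ols_fit(xs, ys)
best = rss(xs, ys, intercept, slope)
print(f"intercept={intercept:.2f} slope={slope:.2f} rss={best:.4f}")
```

Any other line, for example one with a slightly different slope, gives a residual sum of squares at least as large as `best`, which is what "least squares" means here.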

Measurement processes that generate statistical data are also subject to error. Many of these errors are classified as random (noise) or systematic (bias), but other types of errors (e.g., blunder, such as when an analyst reports incorrect units) can also be important. The presence of missing data or censoring may result in biased estimates, and specific techniques have been developed to address these problems.[49]

Interval estimation

Confidence intervals: the red line is the true value for the mean in this example, the blue lines are random confidence intervals for 100 realizations.

Most studies only sample part of a population, so results don't fully represent the whole population. Any estimates obtained from the sample only approximate the population value. Confidence intervals allow statisticians to express how closely the sample estimate matches the true value in the whole population. Often they are expressed as 95% confidence intervals. Formally, a 95% confidence interval for a value is a range where, if the sampling and analysis were repeated under the same conditions (yielding a different dataset), the interval would include the true (population) value in 95% of all possible cases. This does not imply that the probability that the true value is in the confidence interval is 95%. From the frequentist perspective, such a claim does not even make sense, as the true value is not a random variable. Either the true value is or is not within the given interval. However, it is true that, before any data are sampled and given a plan for how to construct the confidence interval, the probability is 95% that the yet-to-be-calculated interval will cover the true value: at this point, the limits of the interval are yet-to-be-observed random variables. One approach that does yield an interval that can be interpreted as having a given probability of containing the true value is to use a credible interval from Bayesian statistics: this approach depends on a different way of interpreting what is meant by "probability", that is, as a Bayesian probability.
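The repeated-sampling interpretation can be checked by simulation. The sketch below makes simplifying assumptions that are not part of the text above: the population is normal, its standard deviation is known, and the interval is the textbook z-interval for the mean. The parameter values and seed are arbitrary.

```python
import math
import random
from statistics import NormalDist

rng = random.Random(1)                      # fixed seed for reproducibility
mu, sigma = 10.0, 2.0                       # assumed "true" population parameters
n, trials = 25, 10_000

z = NormalDist().inv_cdf(0.975)             # about 1.96 for a two-sided 95% interval
half_width = z * sigma / math.sqrt(n)       # known-sigma z-interval half-width

covered = 0
for _ in range(trials):
    sample_mean = sum(rng.gauss(mu, sigma) for _ in range(n)) / n
    # Each repetition yields a different interval; count how often it contains mu.
    if sample_mean - half_width <= mu <= sample_mean + half_width:
        covered += 1

coverage = covered / trials
print(f"empirical coverage: {coverage:.3f}")
```

The fraction of intervals that contain the true mean should be close to 0.95. Any single interval, once computed, either contains the true mean or does not.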

In principle confidence intervals can be symmetrical or asymmetrical. An interval can be asymmetrical because it works as a lower or upper bound for a parameter (left-sided interval or right-sided interval), but it can also be asymmetrical because the two-sided interval is built violating symmetry around the estimate. Sometimes the bounds for a confidence interval are reached asymptotically and these are used to approximate the true bounds.

Significance

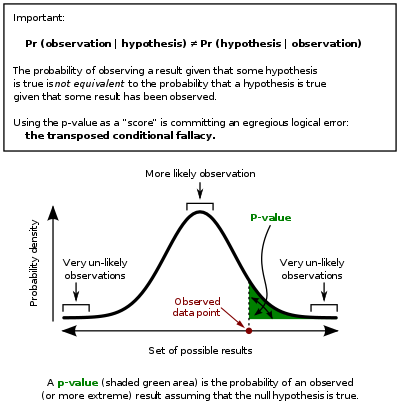

Statistics rarely give a simple Yes/No type answer to the question under analysis. Interpretation often comes down to the level of statistical significance applied to the numbers and often refers to the probability of a value accurately rejecting the null hypothesis (sometimes referred to as the p-value).

In this graph the black line is the probability distribution for the test statistic, the critical region is the set of values to the right of the observed data point (observed value of the test statistic) and the p-value is represented by the green area.

The standard approach[46] is to test a null hypothesis against an alternative hypothesis. A critical region is the set of values of the estimator that leads to refuting the null hypothesis. The probability of type I error is therefore the probability that the estimator belongs to the critical region given that the null hypothesis is true (statistical significance), and the probability of type II error is the probability that the estimator doesn't belong to the critical region given that the alternative hypothesis is true. The statistical power of a test is the probability that it correctly rejects the null hypothesis when the null hypothesis is false.
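Type I error rate and power can both be estimated by simulating a test many times. The sketch below assumes a one-sided z-test for a normal mean with known standard deviation; the function name `rejection_rate`, the effect size of 0.5, and the other defaults are hypothetical choices for the illustration.

```python
import math
import random
from statistics import NormalDist

def rejection_rate(true_mean, mu0=0.0, sigma=1.0, n=20,
                   alpha=0.05, trials=5000, seed=0):
    """Fraction of simulated samples whose z statistic falls in the
    critical region of a one-sided test of H0: mean = mu0."""
    rng = random.Random(seed)
    critical = NormalDist().inv_cdf(1 - alpha)   # boundary of the critical region
    rejections = 0
    for _ in range(trials):
        mean = sum(rng.gauss(true_mean, sigma) for _ in range(n)) / n
        z = (mean - mu0) / (sigma / math.sqrt(n))
        if z > critical:
            rejections += 1
    return rejections / trials

# H0 true: every rejection is a type I error, so the rate should be near alpha.
type_i_rate = rejection_rate(true_mean=0.0)

# H0 false (true mean 0.5): the rejection rate estimates the power,
# and one minus it estimates the type II error probability.
power = rejection_rate(true_mean=0.5)
type_ii_rate = 1 - power

print(f"type I ~ {type_i_rate:.3f}, power ~ {power:.3f}, type II ~ {type_ii_rate:.3f}")
```

Under these assumptions the theoretical power is roughly 0.72, so the simulated value should fall close to that, while the simulated type I rate should sit near the chosen significance level of 0.05.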

Referring to statistical significance does not necessarily mean that the overall result is significant in real world terms. For example, in a large study of a drug it may be shown that the drug has a statistically significant but very small beneficial effect, such that the drug is unlikely to help the patient noticeably.

Although in principle the acceptable level of statistical significance may be subject to debate, the significance level is the largest p-value that allows the test to reject the null hypothesis. This test is logically equivalent to saying that the p-value is the probability, assuming the null hypothesis is true, of observing a result at least as extreme as the test statistic. Therefore, the smaller the significance level, the lower the probability of committing type I error.
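The relationship between the p-value and the significance level can be shown with a small worked example. It assumes a right-tailed z-test with known standard deviation; the function name `one_sided_z_test` and all numeric inputs are made up for the illustration.

```python
import math
from statistics import NormalDist

def one_sided_z_test(sample_mean, mu0, sigma, n):
    """Right-tailed z-test of H0: mean = mu0 with known sigma.

    Returns the test statistic and the p-value, i.e. the probability under H0
    of a statistic at least as large as the one observed."""
    z = (sample_mean - mu0) / (sigma / math.sqrt(n))
    p_value = 1.0 - NormalDist().cdf(z)
    return z, p_value

# Made-up inputs: observed mean 10.8 from n = 25 observations, H0 mean 10, sigma 2.
z, p = one_sided_z_test(sample_mean=10.8, mu0=10.0, sigma=2.0, n=25)

alpha = 0.05            # chosen significance level
reject = p <= alpha     # reject H0 exactly when the p-value does not exceed alpha

print(f"z={z:.2f} p={p:.4f} reject={reject}")
```

Here the statistic is about 2 standard errors above the null value, giving a p-value near 0.023, so the null hypothesis is rejected at the 0.05 level but would not be rejected at the 0.01 level.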

Some problems are usually associated with this framework (See criticism of hypothesis testing):

- A difference that is highly statistically significant can still be of no practical significance, but it is possible to properly formulate tests to account for this. One response involves going beyond reporting only the significance level to include the p-value when reporting whether a hypothesis is rejected or accepted. The p-value, however, does not indicate the size or importance of the observed effect and can also seem to exaggerate the importance of minor differences in large studies. A better and increasingly common approach is to report confidence intervals. Although these are produced from the same calculations as those of hypothesis tests or p-values, they describe both the size of the effect and the uncertainty surrounding it.

- Fallacy of the transposed conditional, also known as the prosecutor's fallacy: criticisms arise because the hypothesis testing approach forces one hypothesis (the null hypothesis) to be favored, since what is being evaluated is the probability of the observed result given the null hypothesis and not the probability of the null hypothesis given the observed result. An alternative to this approach is offered by Bayesian inference, although it requires establishing a prior probability.[50]

- Rejecting the null hypothesis does not automatically prove the alternative hypothesis.

- Like everything in inferential statistics, it relies on sample size, and therefore under fat tails p-values may be seriously miscomputed.
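The first point in the list above, that a tiny effect can be highly significant in a large study, can be made concrete with a worked calculation. This is a sketch under stated assumptions: a two-sample z-test with equal group sizes and a known common standard deviation, with hypothetical numbers (a mean difference of 0.02 standard deviations and 100,000 subjects per group).

```python
from statistics import NormalDist

def two_sample_z_summary(mean_diff, sigma, n_per_group, confidence=0.95):
    """Two-sided p-value and confidence interval for a difference in means (known sigma)."""
    std_normal = NormalDist()
    standard_error = sigma * (2 / n_per_group) ** 0.5
    z = mean_diff / standard_error
    p_value = 2 * (1 - std_normal.cdf(abs(z)))
    half_width = std_normal.inv_cdf(0.5 + confidence / 2) * standard_error
    return p_value, (mean_diff - half_width, mean_diff + half_width)

# Hypothetical large study: very small effect, very large sample.
p_value, (ci_low, ci_high) = two_sample_z_summary(mean_diff=0.02, sigma=1.0, n_per_group=100_000)

print(f"p-value = {p_value:.2e}")
print(f"95% CI for the difference = ({ci_low:.4f}, {ci_high:.4f})")
```

The p-value alone signals strong evidence against the null hypothesis, while the interval shows that the plausible effect is at most a few hundredths of a standard deviation, which is the extra information a confidence interval carries.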

Examples

Some well-known statistical tests and procedures are:

- Analysis of variance (ANOVA)

- Chi-squared test

- Correlation

- Factor analysis

- Mann–Whitney U

- Mean square weighted deviation (MSWD)

- Pearson product-moment correlation coefficient

- Regression analysis

- Spearman'southward rank correlation coefficient

- Student'due south t-exam

- Time series analysis

- Conjoint Analysis

Exploratory data analysis

Exploratory data analysis (EDA) is an approach to analyzing data sets to summarize their main characteristics, often with visual methods. A statistical model can be used or not, but primarily EDA is for seeing what the data can tell us beyond the formal modeling or hypothesis testing task.
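One simple, non-visual way to summarize the main characteristics of a data set is a five-number summary with an outlier check. The sketch below is only one possible starting point for EDA; the data values are made up, and the 1.5 × IQR fence is a common convention rather than anything prescribed by the text.

```python
from statistics import quantiles

def five_number_summary(data):
    """Minimum, quartiles, maximum, and points outside the 1.5 * IQR fences."""
    ordered = sorted(data)
    q1, q2, q3 = quantiles(ordered, n=4, method="inclusive")
    iqr = q3 - q1
    low_fence, high_fence = q1 - 1.5 * iqr, q3 + 1.5 * iqr
    return {
        "min": ordered[0],
        "q1": q1,
        "median": q2,
        "q3": q3,
        "max": ordered[-1],
        "outliers": [v for v in ordered if v < low_fence or v > high_fence],
    }

values = [2, 4, 4, 5, 7, 9, 10, 12, 13, 41]  # hypothetical measurements
summary = five_number_summary(values)
for name, value in summary.items():
    print(f"{name}: {value}")
```

A summary like this often prompts the next exploratory step, such as plotting the data or checking how the flagged value was recorded.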

Misuse

Misuse of statistics can produce subtle but serious errors in description and interpretation: subtle in the sense that even experienced professionals make such errors, and serious in the sense that they can lead to devastating decision errors. For instance, social policy, medical practice, and the reliability of structures like bridges all rely on the proper use of statistics.

Even when statistical techniques are correctly applied, the results can be hard to interpret for those lacking expertise. The statistical significance of a trend in the data, which measures the extent to which a trend could be caused by random variation in the sample, may or may not agree with an intuitive sense of its significance. The set of basic statistical skills (and skepticism) that people need to deal properly with information in their everyday lives is referred to as statistical literacy.

There is a general perception that statistical knowledge is all too frequently intentionally misused by finding ways to interpret only the data that are favorable to the presenter.[51] A mistrust and misunderstanding of statistics is associated with the quotation, "There are three kinds of lies: lies, damned lies, and statistics". Misuse of statistics can be both inadvertent and intentional, and the book How to Lie with Statistics,[51] by Darrell Huff, outlines a range of considerations. In an attempt to shed light on the use and misuse of statistics, reviews of statistical techniques used in particular fields are conducted (e.g. Warne, Lazo, Ramos, and Ritter (2012)).[52]

Ways to avoid misuse of statistics include using proper diagrams and avoiding bias.[53] Misuse can occur when conclusions are overgeneralized and claimed to be representative of more than they actually are, often by either deliberately or unconsciously overlooking sampling bias.[54] Bar graphs are arguably the easiest diagrams to use and understand, and they can be made either by hand or with simple computer programs.[53] Unfortunately, most people do not look for bias or errors, so they are not noticed. Thus, people may often believe that something is true even if it is not well represented.[54] To make data gathered from statistics believable and accurate, the sample taken must be representative of the whole.[55] According to Huff, "The dependability of a sample can be destroyed by [bias]... allow yourself some degree of skepticism."[56]

To assist in the understanding of statistics, Huff proposed a series of questions to be asked in each case:[51]

- Who says so? (Does he/she have an axe to grind?)

- How does he/she know? (Does he/she have the resources to know the facts?)

- What's missing? (Does he/she give us a complete picture?)

- Did someone change the subject? (Does he/she offer us the right answer to the wrong problem?)

- Does it make sense? (Is his/her conclusion logical and consistent with what we already know?)

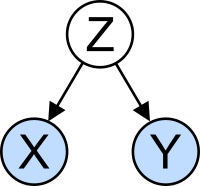

The confounding variable problem: X and Y may be correlated, not because there is a causal relationship between them, but because both depend on a third variable Z. Z is called a confounding factor.

Misinterpretation: correlation

The concept of correlation is particularly noteworthy for the potential confusion it can cause. Statistical analysis of a data set often reveals that two variables (properties) of the population under consideration tend to vary together, as if they were connected. For example, a study of annual income that also looks at age of death might find that poor people tend to have shorter lives than affluent people. The two variables are said to be correlated; however, they may or may not be the cause of one another. The correlation could be caused by a third, previously unconsidered phenomenon, called a lurking variable or confounding variable. For this reason, there is no way to immediately infer the existence of a causal relationship between the two variables.

Applications

Applied statistics, theoretical statistics and mathematical statistics

Applied statistics, sometimes referred to as statistical science,[57] comprises descriptive statistics and the application of inferential statistics.[58] [59] Theoretical statistics concerns the logical arguments underlying justification of approaches to statistical inference, as well as encompassing mathematical statistics. Mathematical statistics includes not only the manipulation of probability distributions necessary for deriving results related to methods of estimation and inference, but also various aspects of computational statistics and the design of experiments.

Statistical consultants can help organizations and companies that don't have in-house expertise relevant to their particular questions.

Machine learning and data mining

Machine learning models are statistical and probabilistic models that capture patterns in the data through the use of computational algorithms.

Statistics in academia

Statistics is applicable to a wide variety of academic disciplines, including natural and social sciences, government, and business. Business statistics applies statistical methods in econometrics, auditing and production and operations, including services improvement and marketing research.[60] A study of two journals in tropical biology found that the 12 most frequent statistical tests are: Analysis of Variance (ANOVA), Chi-Square Test, Student's T Test, Linear Regression, Pearson's Correlation Coefficient, Mann-Whitney U Test, Kruskal-Wallis Test, Shannon's Diversity Index, Tukey's Test, Cluster Analysis, Spearman's Rank Correlation Test and Principal Component Analysis.[61]

A typical statistics course covers descriptive statistics, probability, binomial and normal distributions, test of hypotheses and confidence intervals, linear regression, and correlation.[62] Modern fundamental statistical courses for undergraduate students focus on correct test selection, results interpretation, and use of free statistics software.[61]

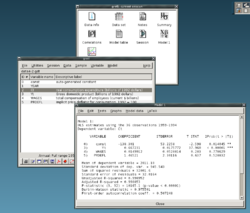

Statistical computing

The rapid and sustained increases in computing power starting from the second half of the 20th century have had a substantial impact on the practice of statistical science. Early statistical models were almost always from the class of linear models, but powerful computers, coupled with suitable numerical algorithms, caused an increased interest in nonlinear models (such as neural networks) as well as the creation of new types, such as generalized linear models and multilevel models.

Increased computing power has also led to the growing popularity of computationally intensive methods based on resampling, such as permutation tests and the bootstrap, while techniques such as Gibbs sampling have made use of Bayesian models more feasible. The computer revolution has implications for the future of statistics with a new emphasis on "experimental" and "empirical" statistics. A large number of both general and special purpose statistical software are now available. Examples of available software capable of complex statistical computation include programs such as Mathematica, SAS, SPSS, and R.
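The two resampling methods named above can be sketched in a few lines. This is a minimal illustration rather than a production routine: it uses a percentile bootstrap interval for a mean and a two-sided permutation test for a difference in means, and the two small samples and all function names are hypothetical.

```python
import random
from statistics import fmean

def bootstrap_ci(data, stat=fmean, n_resamples=5_000, confidence=0.95, seed=0):
    """Percentile bootstrap interval: resample with replacement, recompute the statistic."""
    rng = random.Random(seed)
    n = len(data)
    estimates = sorted(stat(rng.choices(data, k=n)) for _ in range(n_resamples))
    low_index = int((1 - confidence) / 2 * n_resamples)
    high_index = int((1 + confidence) / 2 * n_resamples) - 1
    return estimates[low_index], estimates[high_index]

def permutation_p_value(a, b, n_permutations=5_000, seed=0):
    """Two-sided permutation test for a difference in means between groups a and b."""
    rng = random.Random(seed)
    observed = abs(fmean(a) - fmean(b))
    pooled = list(a) + list(b)
    at_least_as_extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)  # reassign group labels at random
        diff = abs(fmean(pooled[:len(a)]) - fmean(pooled[len(a):]))
        at_least_as_extreme += diff >= observed
    # Adding 1 to both counts keeps the estimated p-value strictly above zero.
    return (at_least_as_extreme + 1) / (n_permutations + 1)

group_a = [5.1, 4.9, 6.2, 5.8, 6.0, 5.5, 5.3, 6.1]  # hypothetical measurements
group_b = [4.2, 4.8, 4.5, 5.0, 4.1, 4.7, 4.4, 4.9]

ci_low, ci_high = bootstrap_ci(group_a)
p_value = permutation_p_value(group_a, group_b)
print(f"bootstrap 95% CI for mean of group_a: ({ci_low:.2f}, {ci_high:.2f})")
print(f"permutation p-value: {p_value:.4f}")
```

Both functions replace distributional formulas with repeated recomputation, which is why these methods only became practical once computing power was cheap.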

Business statistics

In business, "statistics" is a widely used management and decision support tool. It is particularly applied in financial management, marketing management, and production, services and operations management.[63] [64] Statistics is also heavily used in management accounting and auditing. The discipline of Management Science formalizes the use of statistics, and other mathematics, in business. (Econometrics is the application of statistical methods to economic data in order to give empirical content to economic relationships.)

A typical "Business Statistics" course is intended for business majors, and covers[65] descriptive statistics (collection, description, analysis, and summary of data), probability (typically the binomial and normal distributions), test of hypotheses and confidence intervals, linear regression, and correlation; (follow-on) courses may include forecasting, time series, decision trees, multiple linear regression, and other topics from business analytics more generally. See also Business mathematics § University level. Professional certification programs, such as the CFA, often include topics in statistics.

Statistics applied to mathematics or the arts

Traditionally, statistics was concerned with drawing inferences using a semi-standardized methodology that was "required learning" in most sciences. This tradition has changed with the use of statistics in non-inferential contexts. What was once considered a dry subject, taken in many fields as a degree requirement, is now viewed enthusiastically. Initially derided by some mathematical purists, it is now considered essential methodology in certain areas.

- In number theory, scatter plots of data generated by a distribution function may be transformed with familiar tools used in statistics to reveal underlying patterns, which may then lead to hypotheses.

- Predictive methods of statistics in forecasting combining chaos theory and fractal geometry can be used to create video works.[66]

- The process art of Jackson Pollock relied on artistic experiments whereby underlying distributions in nature were artistically revealed.[67] With the advent of computers, statistical methods were applied to formalize such distribution-driven natural processes to make and analyze moving video art.

- Methods of statistics may be used predictively in performance art, as in a card trick based on a Markov process that only works some of the time, the occasion of which can be predicted using statistical methodology.

- Statistics can be used to predictively create art, as in the statistical or stochastic music invented by Iannis Xenakis, where the music is performance-specific. Though this type of artistry does not always come out as expected, it does behave in ways that are predictable and tunable using statistics.

Specialized disciplines

Statistical techniques are used in a wide range of types of scientific and social research, including: biostatistics, computational biology, computational sociology, network biology, social science, sociology and social research. Some fields of inquiry use applied statistics so extensively that they have specialized terminology. These disciplines include:

- Actuarial science (assesses risk in the insurance and finance industries)

- Applied information economics

- Astrostatistics (statistical evaluation of astronomical data)

- Biostatistics

- Chemometrics (for analysis of data from chemistry)

- Data mining (applying statistics and pattern recognition to discover knowledge from data)

- Data science

- Demography (statistical study of populations)

- Econometrics (statistical analysis of economic data)

- Energy statistics

- Engineering statistics

- Epidemiology (statistical analysis of disease)

- Geography and geographic information systems, specifically in spatial analysis

- Image processing

- Jurimetrics (law)

- Medical statistics

- Political science

- Psychological statistics

- Reliability engineering

- Social statistics

- Statistical mechanics

- Social statistics

- Statistical mechanics

In addition, there are particular types of statistical analysis that have also developed their own specialised terminology and methodology:

- Bootstrap / jackknife resampling

- Multivariate statistics

- Statistical classification

- Structured data analysis

- Structural equation modelling

- Survey methodology

- Survival analysis

- Statistics in various sports, particularly baseball – known as sabermetrics – and cricket

Statistics also forms a key basis tool in business and manufacturing. It is used to understand measurement-system variability, to control processes (as in statistical process control, or SPC), to summarize data, and to make data-driven decisions. In these roles, it is a key tool, and perhaps the only reliable tool.

See also

- Abundance estimation

- Glossary of probability and statistics

- List of academic statistical associations

- List of important publications in statistics

- List of national and international statistical services

- List of statistical packages (software)

- List of statistics articles

- List of university statistical consulting centers

- Notation in probability and statistics

- Statistics education

- World Statistics Day

- Foundations and major areas of statistics

- Foundations of statistics

- List of statisticians

- Official statistics

- Multivariate analysis of variance

References

- "Statistics". Oxford Reference. Oxford University Press. January 2008. ISBN 978-0-19-954145-4.

- Romijn, Jan-Willem (2014). "Philosophy of statistics". Stanford Encyclopedia of Philosophy.

- "Cambridge Dictionary".

- Dodge, Y. (2006) The Oxford Dictionary of Statistical Terms, Oxford University Press. ISBN 0-19-920613-9

- Lund Research Ltd. "Descriptive and Inferential Statistics". statistics.laerd.com. Retrieved 2014-03-23.

- "What Is the Difference Between Type I and Type II Hypothesis Testing Errors?". About.com Education. Retrieved 2015-11-27.

- Moses, Lincoln E. (1986) Think and Explain with Statistics, Addison-Wesley, ISBN 978-0-201-15619-5. pp. 1–3

- Hays, William Lee, (1973) Statistics for the Social Sciences, Holt, Rinehart and Winston, p. xii, ISBN 978-0-03-077945-9

- Moore, David (1992). "Teaching Statistics as a Respectable Subject". In F. Gordon; S. Gordon (eds.). Statistics for the Twenty-First Century. Washington, DC: The Mathematical Association of America. pp. 14–25. ISBN 978-0-88385-078-7.

- Chance, Beth L.; Rossman, Allan J. (2005). "Preface" (PDF). Investigating Statistical Concepts, Applications, and Methods. Duxbury Press. ISBN 978-0-495-05064-3.

- Lakshmikantham, ed. by D. Kannan, V. (2002). Handbook of stochastic analysis and applications. New York: M. Dekker. ISBN 0824706609.

- Schervish, Mark J. (1995). Theory of statistics (Corr. 2nd print. ed.). New York: Springer. ISBN 0387945466.

- Broemeling, Lyle D. (1 November 2011). "An Account of Early Statistical Inference in Arab Cryptology". The American Statistician. 65 (4): 255–257. doi:10.1198/tas.2011.10191. S2CID 123537702.

- Willcox, Walter (1938) "The Founder of Statistics". Review of the International Statistical Institute 5(4): 321–328. JSTOR 1400906

- J. Franklin, The Science of Conjecture: Evidence and Probability before Pascal, Johns Hopkins Univ Pr 2002

- Helen Mary Walker (1975). Studies in the history of statistical method. Arno Press. ISBN 9780405066283.

- Galton, F (1877). "Typical laws of heredity". Nature. 15 (388): 492–553. Bibcode:1877Natur..15..492.. doi:10.1038/015492a0.

- Stigler, S.M. (1989). "Francis Galton's Account of the Invention of Correlation". Statistical Science. 4 (2): 73–79. doi:10.1214/ss/1177012580.

- Pearson, K. (1900). "On the Criterion that a given System of Deviations from the Probable in the Case of a Correlated System of Variables is such that it can be reasonably supposed to have arisen from Random Sampling". Philosophical Magazine. Series 5. 50 (302): 157–175. doi:10.1080/14786440009463897.

- "Karl Pearson (1857–1936)". Department of Statistical Science – University College London. Archived from the original on 2008-09-25.

- Fisher 1971, Chapter II. The Principles of Experimentation, Illustrated by a Psycho-physical Experiment, Section 8. The Null Hypothesis

- OED quote: 1935 R.A. Fisher, The Design of Experiments ii. 19, "We may speak of this hypothesis as the 'null hypothesis', and the null hypothesis is never proved or established, but is possibly disproved, in the course of experimentation."

- Box, JF (Feb 1980). "R.A. Fisher and the Design of Experiments, 1922–1926". The American Statistician. 34 (1): 1–7. doi:10.2307/2682986. JSTOR 2682986.

- Yates, F (June 1964). "Sir Ronald Fisher and the Design of Experiments". Biometrics. 20 (2): 307–321. doi:10.2307/2528399. JSTOR 2528399.

- Stanley, Julian C. (1966). "The Influence of Fisher's "The Design of Experiments" on Educational Research Thirty Years Later". American Educational Research Journal. 3 (3): 223–229. doi:10.3102/00028312003003223. JSTOR 1161806. S2CID 145725524.

- Agresti, Alan; David B. Hichcock (2005). "Bayesian Inference for Categorical Data Analysis" (PDF). Statistical Methods & Applications. 14 (3): 298. doi:10.1007/s10260-005-0121-y. S2CID 18896230.

- Edwards, A.W.F. (1998). "Natural Selection and the Sex Ratio: Fisher's Sources". American Naturalist. 151 (6): 564–569. doi:10.1086/286141. PMID 18811377. S2CID 40540426.

- Fisher, R.A. (1915) The evolution of sexual preference. Eugenics Review (7) 184:192

- Fisher, R.A. (1930) The Genetical Theory of Natural Selection. ISBN 0-19-850440-3

- Edwards, A.W.F. (2000) Perspectives: Anecdotal, Historical and Critical Commentaries on Genetics. The Genetics Society of America (154) 1419:1426

- Andersson, Malte (1994). Sexual Selection. Princeton University Press. ISBN 0-691-00057-3.

- Andersson, M. and Simmons, L.W. (2006) Sexual selection and mate choice. Trends, Ecology and Evolution (21) 296:302

- Gayon, J. (2010) Sexual selection: Another Darwinian process. Comptes Rendus Biologies (333) 134:144

- Neyman, J (1934). "On the two different aspects of the representative method: The method of stratified sampling and the method of purposive selection". Journal of the Royal Statistical Society. 97 (4): 557–625. doi:10.2307/2342192. JSTOR 2342192.

- "Science in a Complex World – Big Data: Opportunity or Threat?". Santa Fe Institute.

- Freedman, D.A. (2005) Statistical Models: Theory and Practice, Cambridge University Press. ISBN 978-0-521-67105-7

- McCarney R, Warner J, Iliffe S, van Haselen R, Griffin M, Fisher P (2007). "The Hawthorne Effect: a randomised, controlled trial". BMC Med Res Methodol. 7 (1): 30. doi:10.1186/1471-2288-7-30. PMC 1936999. PMID 17608932.

- Rothman, Kenneth J; Greenland, Sander; Lash, Timothy, eds. (2008). "7". Modern Epidemiology (3rd ed.). Lippincott Williams & Wilkins. p. 100. ISBN 9780781755641.

- Mosteller, F.; Tukey, J.W (1977). Data analysis and regression. Boston: Addison-Wesley.

- Nelder, J.A. (1990). The knowledge needed to computerise the analysis and interpretation of statistical information. In Expert systems and artificial intelligence: the need for information about data. Library Association Report, London, March, 23–27.

- Chrisman, Nicholas R (1998). "Rethinking Levels of Measurement for Cartography". Cartography and Geographic Information Science. 25 (4): 231–242. doi:10.1559/152304098782383043.

- van den Berg, G. (1991). Choosing an analysis method. Leiden: DSWO Press

- Hand, D.J. (2004). Measurement theory and practice: The world through quantification. London: Arnold.

- Mann, Prem S. (1995). Introductory Statistics (2nd ed.). Wiley. ISBN 0-471-31009-3.

- Upton, G., Cook, I. (2008) Oxford Dictionary of Statistics, OUP. ISBN 978-0-19-954145-4.

- Piazza Elio, Probabilità e Statistica, Esculapio 2007

- Everitt, Brian (1998). The Cambridge Dictionary of Statistics. Cambridge, UK New York: Cambridge University Press. ISBN 0521593468.

- "Cohen (1994) The Earth Is Round (p < .05)". YourStatsGuru.com.

- Rubin, Donald B.; Little, Roderick J.A., Statistical analysis with missing data, New York: Wiley 2002

- Ioannidis, J.P.A. (2005). "Why Most Published Research Findings Are False". PLOS Medicine. 2 (8): e124. doi:10.1371/journal.pmed.0020124. PMC 1182327. PMID 16060722.

- Huff, Darrell (1954) How to Lie with Statistics, WW Norton & Company, Inc. New York. ISBN 0-393-31072-8

- Warne, R. Lazo; Ramos, T.; Ritter, N. (2012). "Statistical Methods Used in Gifted Education Journals, 2006–2010". Gifted Child Quarterly. 56 (3): 134–149. doi:10.1177/0016986212444122. S2CID 144168910.

- Drennan, Robert D. (2008). "Statistics in archaeology". In Pearsall, Deborah M. (ed.). Encyclopedia of Archaeology. Elsevier Inc. pp. 2093–2100. ISBN 978-0-12-373962-9.

- Cohen, Jerome B. (December 1938). "Misuse of Statistics". Journal of the American Statistical Association. JSTOR. 33 (204): 657–674. doi:10.1080/01621459.1938.10502344.

- Freund, J.E. (1988). "Modern Elementary Statistics". Credo Reference.

- Huff, Darrell; Irving Geis (1954). How to Lie with Statistics. New York: Norton. "The dependability of a sample can be destroyed by [bias]... allow yourself some degree of skepticism."

- Nelder, John A. (1999). "From Statistics to Statistical Science". Journal of the Royal Statistical Society. Series D (The Statistician). 48 (2): 257–269. doi:10.1111/1467-9884.00187. ISSN 0039-0526. JSTOR 2681191.

- Nikoletseas, M.M. (2014) "Statistics: Concepts and Examples." ISBN 978-1500815684

- Anderson, D.R.; Sweeney, D.J.; Williams, T.A. (1994) Introduction to Statistics: Concepts and Applications, pp. 5–9. West Group. ISBN 978-0-314-03309-3

- "Journal of Business & Economic Statistics". Journal of Business & Economic Statistics. Taylor & Francis. Retrieved 16 March 2020.

- Natalia Loaiza Velásquez, María Isabel González Lutz & Julián Monge-Nájera (2011). "Which statistics should tropical biologists learn?" (PDF). Revista Biología Tropical. 59: 983–992.

- Pekoz, Erol (2009). The Manager's Guide to Statistics. Erol Pekoz. ISBN 9780979570438.

- "Aims and scope". Journal of Business & Economic Statistics. Taylor & Francis. Retrieved 16 March 2020.

- "Journal of Business & Economic Statistics". Journal of Business & Economic Statistics. Taylor & Francis. Retrieved 16 March 2020.

- Numerous texts are available, reflecting the scope and reach of the discipline in the business world:

- Sharpe, N. (2014). Business Statistics, Pearson. ISBN 978-0134705217

- Wegner, T. (2010). Applied Business Statistics: Methods and Excel-Based Applications, Juta Academic. ISBN 0702172863

- Holmes, L., Illowsky, B., Dean, S (2017). Introductory Business Statistics

- Nica, M. (2013). Principles of Business Statistics

- Cline, Graysen (2019). Nonparametric Statistical Methods Using R. EDTECH. ISBN 978-1-83947-325-8. OCLC 1132348139.

- Palacios, Bernardo; Rosario, Alfonso; Wilhelmus, Monica M.; Zetina, Sandra; Zenit, Roberto (2019-10-30). "Pollock avoided hydrodynamic instabilities to paint with his dripping technique". PLOS ONE. 14 (10): e0223706. Bibcode:2019PLoSO..1423706P. doi:10.1371/journal.pone.0223706. ISSN 1932-6203. PMC 6821064. PMID 31665191.

Further reading

- Lydia Denworth, "A Significant Problem: Standard scientific methods are under fire. Will anything change?", Scientific American, vol. 321, no. 4 (October 2019), pp. 62–67. "The use of p values for nearly a century [since 1925] to determine statistical significance of experimental results has contributed to an illusion of certainty and [to] reproducibility crises in many scientific fields. There is growing determination to reform statistical analysis... Some [researchers] suggest changing statistical methods, whereas others would do away with a threshold for defining "significant" results." (p. 63.)

- Barbara Illowsky; Susan Dean (2014). Introductory Statistics. OpenStax CNX. ISBN 9781938168208.

- Stockburger, David W. "Introductory Statistics: Concepts, Models, and Applications". Missouri State University (3rd Web ed.). Archived from the original on 28 May 2020.

- OpenIntro Statistics, archived 2019-06-16 at the Wayback Machine, 3rd edition by Diez, Barr, and Cetinkaya-Rundel

- Stephen Jones, 2010. Statistics in Psychology: Explanations without Equations. Palgrave Macmillan. ISBN 9781137282392.

- Cohen, J (1990). "Things I have learned (so far)" (PDF). American Psychologist. 45 (12): 1304–1312. doi:10.1037/0003-066x.45.12.1304. Archived from the original (PDF) on 2017-10-18.

- Gigerenzer, G (2004). "Mindless statistics". Journal of Socio-Economics. 33 (5): 587–606. doi:10.1016/j.socec.2004.09.033.

- Ioannidis, J.P.A. (2005). "Why most published research findings are false". PLOS Medicine. 2 (4): 696–701. doi:10.1371/journal.pmed.0040168. PMC 1855693. PMID 17456002.

External links

- (Electronic Version): TIBCO Software Inc. (2020). Data Science Textbook.

- Online Statistics Education: An Interactive Multimedia Course of Study. Developed by Rice University (Lead Developer), University of Houston Clear Lake, Tufts University, and National Science Foundation.

- UCLA Statistical Computing Resources

- Philosophy of Statistics from the Stanford Encyclopedia of Philosophy

Source: https://en.wikipedia.org/wiki/Statistics
